Fundamental characteristics of sound

If you are eager to create sounds and want to spend your time on it, whether that means music, sound effects, audiovisual content, or any other way of bringing audio into life, and you want to become a professional sound designer or sound engineer, let’s start by reviewing the foundational definition of what sound is. This knowledge is important for understanding the basics and learning the techniques of working with audio. As a sculptor of this special medium, you have to know your material and its characteristics in order to manipulate it well and give your idea the shape you want.

In the broadest sense, sound is a pressure wave that is created by a vibrating object and propagates through a medium.

These waves travel through air or any other medium (solid, liquid, or gas), causing its particles to vibrate mechanically.

In a more subjective sense, sound is the perception of these vibrations by the senses of animals or humans. The variety of sounds we hear comes from the characteristics of the sound wave (and of the medium it travels through) and from combinations of these parameters.

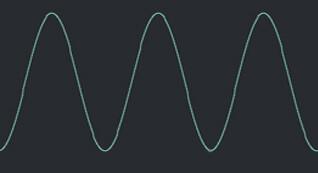

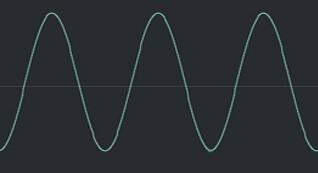

Below, these core characteristics of sound are shown using a simple sine wave as an example:

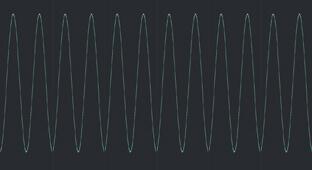

1. Frequency, which the human ear perceives as a low or high sound

Low frequency (Bass)

High frequency (Treble)

A wave can complete many cycles in one second, and the number of these cycles per second is called the frequency of the wave. Because the wave is produced by a vibrating body, the vibrating body has the same characteristic: frequency. The frequency of a sound wave is measured in hertz (Hz).

The same applies to the vibrating body: one complete vibration produces one complete cycle of the wave. If 50 vibrations are produced per second, the frequency of the wave is 50 Hz, that is, 50 cycles per second. In other words, 1 Hz equals one vibration per second.
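The relationship between vibrations, time, and frequency can be sketched in a few lines of Python. This is a minimal illustration; the function names are hypothetical. It also computes the period (the duration of one cycle), which is simply the reciprocal of the frequency.

```python
def frequency_hz(vibrations: int, seconds: float) -> float:
    """Frequency in hertz: complete vibrations (cycles) per second."""
    return vibrations / seconds


def period_s(freq_hz: float) -> float:
    """Duration of one complete cycle in seconds (the reciprocal of frequency)."""
    return 1.0 / freq_hz


f = frequency_hz(50, 1.0)        # 50 vibrations in one second
print(f)                         # 50.0
print(period_s(f))               # 0.02, so one cycle lasts 20 ms
print(frequency_hz(150, 3.0))    # 50.0, the same frequency measured over 3 seconds
```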

Interestingly, when a wave passes through different substances, its frequency does not change; it stays fixed.

The frequency of a wave is the same as the frequency of the vibrating body that produces it. For fast vibrations, a bigger unit, the kilohertz (kHz), is used: 1 kHz = 1000 Hz. When working with audio, we mainly deal with a range of frequencies sounding simultaneously, and their combinations give each sound its character: its tones and harmonics.

A healthy human ear can perceive sound in a range from about 16–20 Hz up to 20 kHz. Sound with a frequency below 20 Hz is called infrasound, and sound above 20 kHz, the upper limit of normal human hearing, is called ultrasound.
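As a quick illustration, the sketch below converts a frequency to kilohertz and labels it relative to the approximate hearing limits given above. The limits are passed as parameters because the exact range varies from person to person; the 20 Hz and 20 kHz defaults and the function name are assumptions for this example.

```python
def classify(freq_hz: float, low_limit_hz: float = 20.0,
             high_limit_hz: float = 20_000.0) -> str:
    """Label a frequency relative to the approximate limits of human hearing."""
    if freq_hz < low_limit_hz:
        return "infrasound"
    if freq_hz > high_limit_hz:
        return "ultrasound"
    return "audible"


for f in (10, 440, 1_000, 25_000):
    # 1 kHz = 1000 Hz
    print(f, "Hz =", f / 1000, "kHz ->", classify(f))
```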

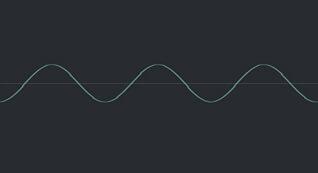

2. Amplitude is another characteristic of a sound wave; the human ear perceives it as volume. While frequency reflects how fast the wave oscillates, amplitude is how high (or low) the wave goes. In daily life, the volume of a sound is measured in units called decibels (dB). The higher the decibel level, the louder the sound.

Low amplitude (Low volume)

High amplitude (High volume)
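Decibels are a logarithmic measure of a ratio, which is why doubling the amplitude adds about 6 dB rather than doubling the number. A minimal Python sketch, assuming amplitudes are expressed relative to a chosen reference amplitude (the function name is hypothetical):

```python
import math


def amplitude_to_db(amplitude: float, reference: float = 1.0) -> float:
    """Level in decibels of an amplitude relative to a reference amplitude.

    Amplitude ratios use 20 * log10; power ratios would use 10 * log10.
    """
    return 20.0 * math.log10(amplitude / reference)


print(round(amplitude_to_db(1.0), 2))   # 0.0   (same as the reference)
print(round(amplitude_to_db(2.0), 2))   # 6.02  (double the amplitude)
print(round(amplitude_to_db(0.5), 2))   # -6.02 (half the amplitude)
print(round(amplitude_to_db(0.1), 2))   # -20.0
```

Note that 0 dB only means "equal to the reference"; true silence (amplitude 0) corresponds to minus infinity decibels, which is why `math.log10(0)` raises an error.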

3. Duration of a sound is the time it lasts, from its start until its volume falls to zero (silence). In other words, it is the sequence of amplitudes of a sound from its starting point until it dies away.
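Because a decaying sound approaches zero only gradually, a practical way to measure its duration is to treat it as silent once its amplitude stays below a small threshold. The sketch below assumes a threshold of 0.001 (roughly -60 dB relative to a peak of 1), an 8 kHz sample rate, and an exponentially decaying 440 Hz test tone; all of these and the function name are illustrative assumptions.

```python
import math


def sounding_duration(samples: list[float], sample_rate: int,
                      threshold: float = 0.001) -> float:
    """Seconds from the first sample to the last sample whose magnitude exceeds threshold."""
    last_index = -1
    for i, s in enumerate(samples):
        if abs(s) > threshold:
            last_index = i
    return (last_index + 1) / sample_rate


# Hypothetical test signal: a 440 Hz sine that dies away exponentially.
sample_rate = 8_000
decay_rate = 5.0   # larger values make the sound fade faster
signal = [
    math.exp(-decay_rate * n / sample_rate) * math.sin(2 * math.pi * 440 * n / sample_rate)
    for n in range(2 * sample_rate)   # two seconds of samples
]
print(round(sounding_duration(signal, sample_rate), 2))
```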

4. Timbre is a quality of the sound itself. It is what makes two different musical instruments sound different from each other, even when each plays the same musical note at exactly the same volume. By “playing the same note” we mean that the instruments have the same pitch (frequency) and loudness. Returning to frequency, it is worth adding that frequency determines the tone (pitch), that is, the note at which the sound is played.

When a note is played on any instrument, we hear the main tone (the fundamental) along with other subtle tones at different pitches; these are harmonics. Those lower in pitch than the main tone are called subtones (undertones), and those higher in pitch are called overtones. Our ears perceive the sum of all these frequencies as the sound of a particular instrument.
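The idea that timbre comes from the mix of overtones on top of the fundamental can be sketched by summing sine waves at whole-number multiples of one frequency. The two harmonic recipes below are made-up examples, not measurements of real instruments, and the function name is hypothetical.

```python
import math


def tone(t: float, fundamental_hz: float, harmonic_amps: dict[int, float]) -> float:
    """Sum of sine partials: harmonic number n sounds at n * fundamental_hz."""
    return sum(
        amp * math.sin(2 * math.pi * n * fundamental_hz * t)
        for n, amp in harmonic_amps.items()
    )


# Two hypothetical "instruments" playing the same 220 Hz note.
mellow = {1: 1.0, 2: 0.2}                          # mostly the fundamental
bright = {1: 1.0, 2: 0.6, 3: 0.5, 4: 0.4, 5: 0.3}  # strong overtones

sample_rate = 8_000
mellow_samples = [tone(n / sample_rate, 220.0, mellow) for n in range(sample_rate)]
bright_samples = [tone(n / sample_rate, 220.0, bright) for n in range(sample_rate)]
```

Both signals repeat every 1/220 of a second, so they have the same pitch; only the balance of overtones, and therefore the waveform shape and the timbre, differs.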

When it comes to sound synthesis, the technique of generating a desired sound from scratch, it is necessary to get familiar with the most common waveform shapes. Sound waves can take various shapes, and since sound is the product of a wave, the waveform shape dictates the color, texture, and harmonic content of the resulting sound. Audio synthesis is based on giving a wave a particular shape, blending waves, and running them through envelopes, filters, and effects. The result can be a completely new sound that the designer or music producer imagined, or a sound they want to reproduce. Electronic hardware or software can be used for this purpose. In Amped Studio you can choose from several online synthesizers to experiment with, play, and create your own unique sounds.

1. Sine wave

The sine is the simplest and purest waveform: it contains only one frequency. All other waveforms can be built from sine waves.

Some subtractive synths do not offer the sine as a basic waveform: because it contains only one frequency, there is nothing to subtract from it, so it does not suit subtractive synthesis. A sine wave can easily be approximated by low-pass filtering a triangle wave.
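Because a sine contains only one frequency, it is also the easiest waveform to generate digitally. A minimal Python sketch, assuming a 44.1 kHz sample rate and floating-point samples in the range -1 to 1 (the function name and parameters are hypothetical):

```python
import math


def sine_wave(freq_hz: float, duration_s: float, sample_rate: int = 44_100,
              amplitude: float = 1.0, phase_deg: float = 0.0) -> list[float]:
    """Samples of amplitude * sin(2*pi*f*t + phase): one frequency, no overtones."""
    phase_rad = math.radians(phase_deg)
    n_samples = int(duration_s * sample_rate)
    return [
        amplitude * math.sin(2 * math.pi * freq_hz * n / sample_rate + phase_rad)
        for n in range(n_samples)
    ]


samples = sine_wave(440.0, 0.01)   # 10 ms of an A4 sine at 44.1 kHz
print(len(samples))                # 441
```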

2. Triangle wave

It sounds very similar to a sine, except that it has a few additional frequencies above the fundamental. These are only odd harmonics, the same ones found in a square wave: the root note, the 3rd harmonic, the 5th harmonic, the 7th harmonic, and so on. These harmonics “taper off” the further you get from the root frequency. The difference between a triangle wave and a square wave is that the triangle's harmonics drop off faster.

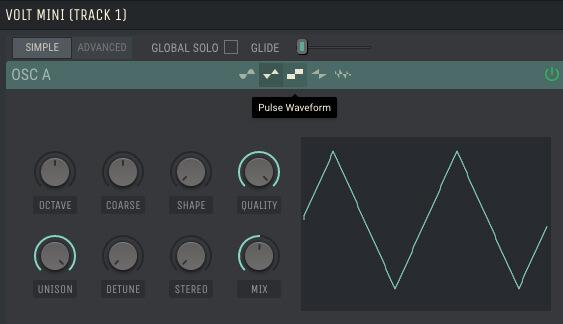
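The odd-harmonic recipe described above can be turned directly into an additive sketch. It uses the standard Fourier series of a triangle wave: odd harmonics n with amplitude proportional to 1/n², alternating in sign. The cut-off at the 31st harmonic and the function name are arbitrary choices for illustration.

```python
import math


def triangle_additive(t: float, freq_hz: float, max_harmonic: int = 31) -> float:
    """Band-limited triangle: odd harmonics n with amplitude 1/n**2 and alternating sign."""
    total = 0.0
    for k, n in enumerate(range(1, max_harmonic + 1, 2)):   # n = 1, 3, 5, ...
        sign = -1.0 if k % 2 else 1.0
        total += sign * math.sin(2 * math.pi * n * freq_hz * t) / n**2
    return 8 / math.pi**2 * total   # scale so the peak is close to 1


# Relative strength of the first few harmonics: they fall off as 1/n^2.
for n in (1, 3, 5, 7):
    print(n, round(1 / n**2, 3))
```

Since even the 3rd harmonic is only about 1/9 of the fundamental, removing the upper harmonics with a low-pass filter leaves something close to a pure sine.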

3. Square wave

A square wave is the simplest pulse waveform, and its shape can be controlled with Pulse Width Modulation (PWM). PWM changes the width of the “squares”: how long each cycle stays high compared with how long it stays low.

Like the triangle wave, it contains only odd harmonics (3rd, 5th, 7th, etc.), but its higher harmonics are much stronger and fade out far more slowly than a triangle's.
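A pulse wave can be sketched by comparing the position within each cycle to a width parameter (the duty cycle); a duty cycle of 0.5 gives a square wave, and changing it over time is what PWM does. The second function gives the standard Fourier amplitudes of a ±1 square wave, 4/(πn) for odd n, which fall off as 1/n instead of the triangle's 1/n². This naive generator is not band-limited, so it will alias at high pitches; real synthesizers use anti-aliasing techniques. Function names are hypothetical.

```python
import math


def pulse(t: float, freq_hz: float, duty: float = 0.5) -> float:
    """Naive pulse wave: +1 for the first `duty` fraction of each cycle, -1 for the rest.

    duty = 0.5 gives a square wave; varying duty over time is pulse width modulation.
    """
    position = (t * freq_hz) % 1.0   # where we are in the current cycle, 0..1
    return 1.0 if position < duty else -1.0


def square_harmonic_amplitude(n: int) -> float:
    """Fourier amplitude of harmonic n of a +/-1 square wave: 4/(pi*n) for odd n, else 0."""
    return 4 / (math.pi * n) if n % 2 else 0.0


sample_rate = 8_000
square = [pulse(i / sample_rate, 100.0) for i in range(sample_rate)]
narrow = [pulse(i / sample_rate, 100.0, duty=0.1) for i in range(sample_rate)]
```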

4. Saw wave

As you can see from the picture, it looks partly similar to a square wave, but it contains both even and odd harmonics. Because it is so rich in harmonics, the saw wave is the most widely used waveform, and many sounds are built on it.

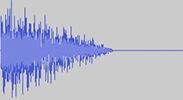
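The sketch below shows two ways to make a saw wave: a naive ramp that rises from -1 to +1 once per cycle, and an additive version that uses every harmonic, even and odd, with amplitude 1/n (the standard Fourier series of that same ramp). The harmonic limit and function names are illustrative assumptions.

```python
import math


def saw_naive(t: float, freq_hz: float) -> float:
    """Naive rising sawtooth from -1 to +1 once per cycle (not band-limited)."""
    position = (t * freq_hz) % 1.0
    return 2.0 * position - 1.0


def saw_additive(t: float, freq_hz: float, max_harmonic: int = 30) -> float:
    """Band-limited sawtooth: every harmonic n (even and odd) with amplitude 1/n."""
    return -2 / math.pi * sum(
        math.sin(2 * math.pi * n * freq_hz * t) / n
        for n in range(1, max_harmonic + 1)
    )


t = 0.0025   # a quarter of the way through a 100 Hz cycle
print(saw_naive(t, 100.0), saw_additive(t, 100.0))
```

The two values are close; the additive version differs slightly because it stops at the 30th harmonic.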

5. Noise wave

Everyone has heard the sound of a TV or radio that isn't tuned to a station: a “shshshsh” hiss. That is exactly how the noise waveform sounds. It sounds this way because it consists of completely random frequencies spread across the entire spectrum. Sound designers use noise for everything from claps, sweeps, and hi-hats to adding top end to synths, and much more.
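One common kind of noise, white noise, can be sketched as a stream of independent random samples; on average every frequency is then present with equal power. Synthesizers may also offer other "colors" of noise, such as pink noise, which this sketch does not cover. The function name and the optional seed parameter are assumptions for illustration.

```python
import random
from typing import Optional


def white_noise(n_samples: int, amplitude: float = 1.0,
                seed: Optional[int] = None) -> list[float]:
    """Independent uniform random samples in [-amplitude, amplitude]."""
    rng = random.Random(seed)   # a fixed seed makes the "random" result reproducible
    return [amplitude * rng.uniform(-1.0, 1.0) for _ in range(n_samples)]


noise = white_noise(44_100, amplitude=0.5, seed=1)   # one second at 44.1 kHz
```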

As we always say, it is important to experiment. You can use any waveform to make any type of sound, depending on your idea and the audio image you have in mind. General usage can be as follows:

- Lead: Square, Saw;

- Pad: Square, Saw;

- Basses: Triangle, Square, Saw;

- Sub-Bass: Sine, Triangle.

A fundamental truth about sound is that there are only two variables: time and the displacement of particles. We can create any sound imaginable simply by displacing air molecules by the right amount at the right time. Synthesizer software uses mathematical methods to produce the right displacement at the right time, giving us both the harmonics associated with particular waveforms and the additional waves needed to form chords.
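The "displacement over time" view can be made concrete: a chord is just the sum of one wave per note, evaluated at each moment. The sketch uses sine waves for an A major chord in standard equal-temperament tuning (A4 = 440 Hz) and divides by the number of notes to keep the result within -1 to 1; that scaling and the function name are simple assumptions, not how any particular synthesizer mixes voices.

```python
import math


def chord(t: float, freqs_hz: list[float], amplitude: float = 1.0) -> float:
    """Displacement at time t: sum of one sine per note, scaled to stay within +/-amplitude."""
    return amplitude * sum(math.sin(2 * math.pi * f * t) for f in freqs_hz) / len(freqs_hz)


# A major chord: A3, C#4 and E4 in twelve-tone equal temperament (A4 = 440 Hz).
a_major = [220.0, 277.18, 329.63]
sample_rate = 44_100
samples = [chord(n / sample_rate, a_major) for n in range(sample_rate // 10)]  # 100 ms
```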

When describing a sound and working with synthesis, it is also essential to review two more properties of a waveform: phase and the envelope (ADSR).

Phase

As mentioned above, audio waveforms are cyclical; that is, they proceed through regular cycles or repetitions. Phase describes how far along its cycle a waveform is at a given moment, and a phase offset is the amount by which one wave is shifted relative to another. Both are measured in degrees, with one full cycle equal to 360 degrees.

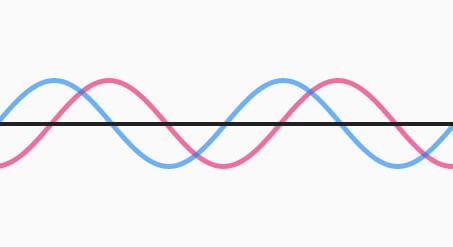

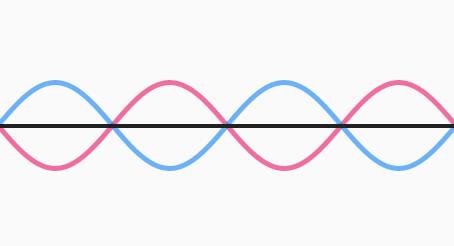

When two waveforms are mixed and they are “out of phase”, that is, delayed relative to one another, some cancellation occurs in the resulting audio. How much cancellation there is, and at which frequencies, depends on the waveforms involved and how far out of phase they are (two identical waveforms 180 degrees out of phase cancel completely).

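When two sine waves of the same frequency and amplitude are 90 degrees out of phase, their sum is partly canceled: it has about 71% of the amplitude, or half the power (3 dB less), of the same two waves combined in phase.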

When two sine waves of the same frequency and amplitude are 180 degrees out of phase, we hear no sound at all: they cancel each other completely.
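The effect of phase on a mix of two identical sine waves can be checked numerically. The sketch mixes two unit-amplitude sines with a given offset and measures the peak of the result over one cycle; it also converts a time delay into a phase offset using phase = 360 × frequency × delay. The 100 Hz test frequency, 48 kHz sample rate, and function names are assumptions for the example.

```python
import math


def peak_of_sum(phase_deg: float, freq_hz: float = 100.0, sample_rate: int = 48_000) -> float:
    """Peak amplitude when two equal, unit-amplitude sines are mixed with a phase offset."""
    offset = math.radians(phase_deg)
    return max(
        abs(math.sin(2 * math.pi * freq_hz * n / sample_rate)
            + math.sin(2 * math.pi * freq_hz * n / sample_rate + offset))
        for n in range(sample_rate // int(freq_hz))   # one full cycle is enough
    )


def delay_to_phase_deg(delay_s: float, freq_hz: float) -> float:
    """Phase offset in degrees caused by delaying a wave of freq_hz by delay_s seconds."""
    return (360.0 * freq_hz * delay_s) % 360.0


for phase in (0, 90, 180):
    print(phase, "degrees:", round(peak_of_sum(phase), 3))
print(delay_to_phase_deg(0.0025, 100.0))   # a 2.5 ms delay at 100 Hz
```

The peaks follow the closed form 2·|cos(φ/2)|: 2 when in phase, about 1.414 (√2) at 90 degrees, and 0 at 180 degrees.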

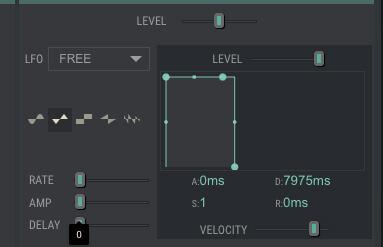

Envelope

The envelope determines how a sound behaves over time. It consists of four separate stages (ADSR).

ADSR stands for Attack, Decay, Sustain, and Release. These envelope parameters let us control the amplitude of a waveform over time.

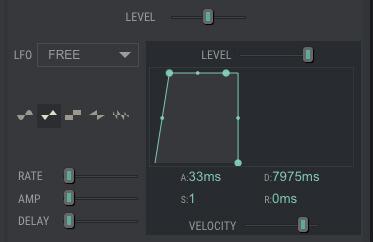

If you use Amped Studio's VOLT synthesizer, you will see an Envelope section that lets you adjust the ADSR parameters and synthesize your own custom sound. Let’s take a brief look at it.

A – Attack

When you press any note on the synthesizer, the first stage that is triggered is the Attack. The Attack setting determines how long it takes for a sound to reach its peak volume after a key is pressed.

Here is an example of a sound with a short attack: when you press the key, you hear the sound instantly, which means the attack is set to 0.

Next is an example of a longer attack: when you press a key, it takes 33 ms for the sound to reach its maximum loudness, because the attack is set to 33 ms.

The next three properties, Decay, Sustain, and Release, are set in the same way and are marked on the synthesizer interface as D, S, and R respectively.

D – Decay

Once the Attack stage is complete, the Decay stage follows. During Decay, the volume falls from its peak to the Sustain level, so the decay setting controls how long this decrease takes.

S – Sustain

Sustain is the level at which the sound remains while you hold the key down, after it has passed the Decay stage. Unlike the other three parameters, it is a level, not a time.

R – Release

Release is the time it takes for the sound's volume to decrease to complete silence. It is the final stage of the envelope and becomes active when we release the key.

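Release normally starts from the sustain level: if the sustain level is zero and the key is held until the decay finishes, the sound has already faded out and there is nothing left to release. By controlling the attack, decay, sustain, and release of a waveform, you can truly change its timbre.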
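The four stages can be sketched as a function that returns a gain between 0 and 1 for any moment after the key is pressed. This is a generic sketch with straight-line segments, not VOLT's actual implementation (many synths use curved segments); the function name, parameters, and example settings are hypothetical, with the 33 ms attack taken from the example above.

```python
def adsr_gain(t: float, attack: float, decay: float, sustain: float,
              release: float, note_off: float) -> float:
    """Linear ADSR envelope gain (0..1) at time t seconds after the key is pressed.

    attack, decay and release are times in seconds; sustain is a level from 0 to 1;
    note_off is the moment the key is released.
    """
    def held_level(x: float) -> float:
        # Gain while the key is held: Attack ramp, then Decay ramp, then Sustain level.
        if x < attack:
            return x / attack
        if x < attack + decay:
            return 1.0 - (1.0 - sustain) * (x - attack) / decay
        return sustain

    if t < 0:
        return 0.0
    if t < note_off:
        return held_level(t)
    # Release: fade linearly to silence from whatever level the envelope had at note_off.
    start = held_level(note_off)
    if release <= 0 or t >= note_off + release:
        return 0.0
    return start * (1.0 - (t - note_off) / release)


# Hypothetical settings: 33 ms attack, 200 ms decay, sustain at 60%, 500 ms release,
# key held for 1 second.
settings = dict(attack=0.033, decay=0.2, sustain=0.6, release=0.5, note_off=1.0)
for t in (0.0, 0.033, 0.5, 1.0, 1.25, 1.5):
    print(t, round(adsr_gain(t, **settings), 3))
```

Multiplying each sample of a waveform by `adsr_gain` at that sample's time applies the envelope. With a sustain of 0 and the key held past the decay, the release starts from zero, so nothing is heard after the key is released.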

Here is a more detailed review of the VOLT online synth, a convenient and effective tool for realizing your audio ideas.

In Closing

Sound is a form of energy and information, and working with sound means working with a subtle form of energy. These vibrations play an essential role in human life. The right sound, delivered to the right person at the right time, can be a very powerful communication tool, and audio communication is sometimes more effective than visual communication. Knowledge of sound is fundamental for musicians, sound designers, and audio engineers.
